Before a senior R&D engineer at a mid-size SaaS company let an AI agent touch his live Jira data, he built a workaround. He ran the agent in a parallel mode where instead of updating tickets directly, it would write its proposed changes to a Confluence page he created. He could review what the agent planned to do before it did anything.

The system worked. The agent’s suggestions were good. Eventually he trusted it with production access.

But here is the part worth sitting with: he spent meaningful engineering time building a shadow system to validate a tool that was supposed to save engineering time. The caution was rational. The overhead was real.
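For readers who want to picture the workaround, here is a minimal sketch of a shadow mode. It is not the engineer's actual implementation and it calls no real Jira or Confluence API. The names `ShadowRunner`, `ProposedChange`, `propose`, `review_page`, and `apply_approved` are hypothetical, and a plain dictionary stands in for live ticket data.

```python
from dataclasses import dataclass, field


@dataclass
class ProposedChange:
    """One change the agent wants to make, recorded instead of applied."""
    ticket_id: str
    field_name: str
    old_value: str
    new_value: str


@dataclass
class ShadowRunner:
    """Hypothetical shadow mode: collect proposals, apply only what a human approves."""
    tickets: dict  # ticket_id -> {field: value}; an in-memory stand-in for live data
    proposals: list = field(default_factory=list)

    def propose(self, ticket_id, field_name, new_value):
        # Record the intended change without touching the ticket itself.
        old_value = self.tickets[ticket_id].get(field_name, "")
        self.proposals.append(
            ProposedChange(ticket_id, field_name, old_value, new_value)
        )

    def review_page(self):
        # Render the proposals as plain text, the role the review page played.
        return "\n".join(
            f"{p.ticket_id}: {p.field_name} {p.old_value!r} -> {p.new_value!r}"
            for p in self.proposals
        )

    def apply_approved(self, approved_ids):
        # Apply only the proposals whose ticket a reviewer has approved.
        applied = 0
        for p in self.proposals:
            if p.ticket_id in approved_ids:
                self.tickets[p.ticket_id][p.field_name] = p.new_value
                applied += 1
        return applied
```

Even in this toy form, the overhead is visible: every write path needs a second, human-gated path beside it.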

How the permission gap forms

The permission gap is not a trust problem in the abstract. It is a structural problem with a specific cause.

When AI agent mistakes are permanent, restricting agent access is the correct response to risk. Teams limit what agents can modify, require human approval at every step, and treat automation with the same caution they would give to a junior engineer on their first day. The logic is sound.

The result is that AI agents operate well below their capability ceiling. Teams let them do less than they can, because what they do cannot be undone.

What the gap actually costs

The productivity case for AI agents rests on a specific assumption: that agents can act with enough autonomy to actually save time. When that autonomy is capped by permanent-mistake risk, the math stops working.

Consider the code review problem. An engineering leader described it plainly: generating code is no longer the slow part of the development cycle. Reviewing it is. AI tools can now produce code faster than human engineers can evaluate it. Humans have become the bottleneck. Teams that have adopted AI for generation but not for review are running the same pipeline they had before, just with a faster input stage.

The same pattern shows up in every workflow where AI agents touch live data. Rovo can classify Jira tickets. It can update documentation in Confluence. It can automate the operational overhead that consumes hours of engineering time every week. But each of those capabilities comes with a restriction layer, because the cost of a mistake is a permanent data state that no one can walk back.

The paradox underneath the gap

The permission gap looks like a technology adoption problem. Teams are not using AI to its full potential. The fix seems obvious: train people, build confidence, show examples.

That framing misses the root cause.

Teams are not being cautious because they do not understand AI. They are being cautious because they understand the risk precisely. AI actions are irreversible. Restricted access is a rational response to irreversible risk.

The gap will not close through training or encouragement. It will close when the risk profile changes.

What changes the risk profile

If a mistake can be recovered, the rational response to AI is not restriction. It is confidence.

This is not a hypothetical position. It is a direct consequence of how risk calculus works. Teams do not restrict AI agents simply because agents make mistakes; human engineers make mistakes too. They restrict AI agents because agent mistakes cannot be undone. Change that condition, and the restriction loses its justification.

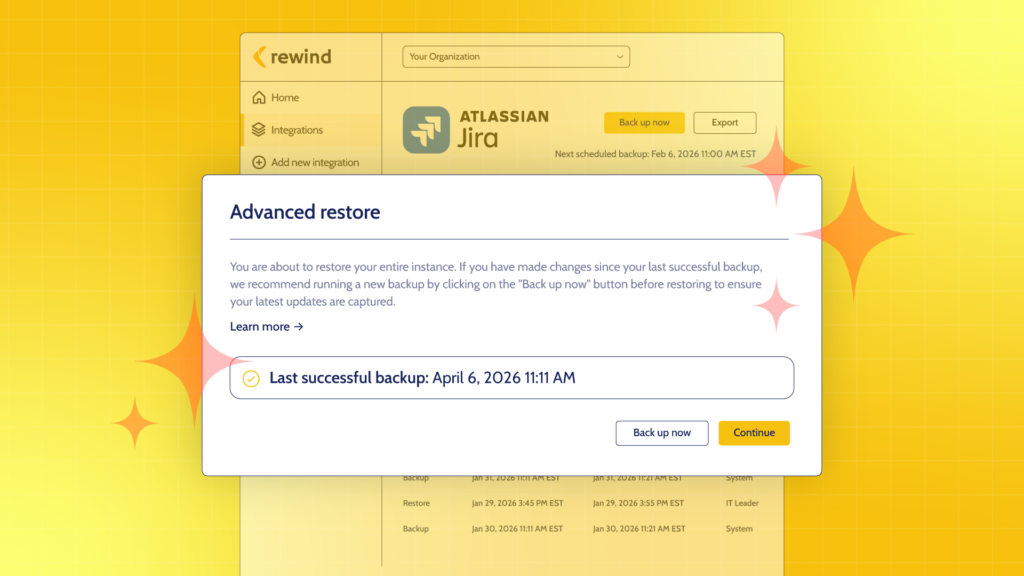

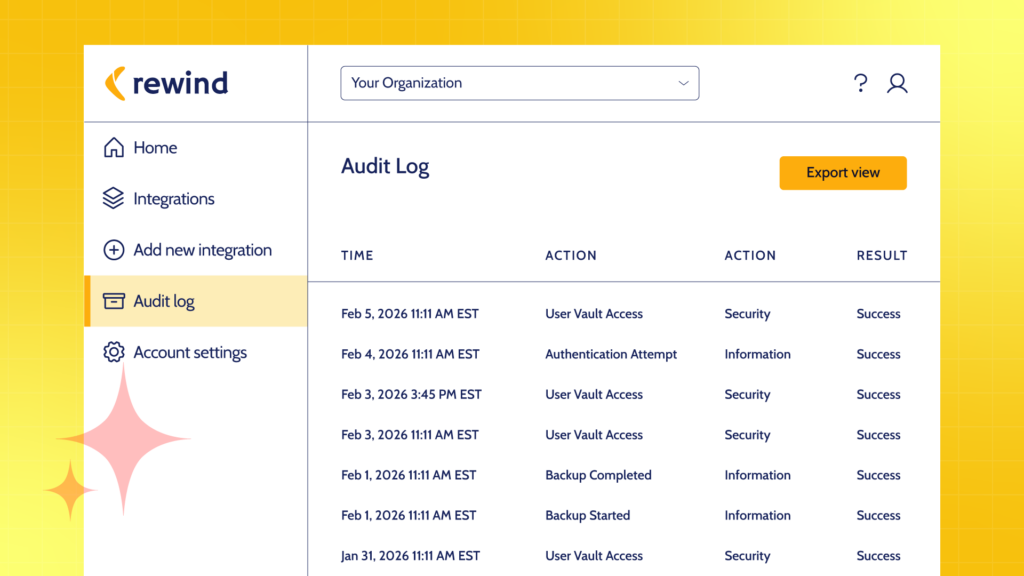

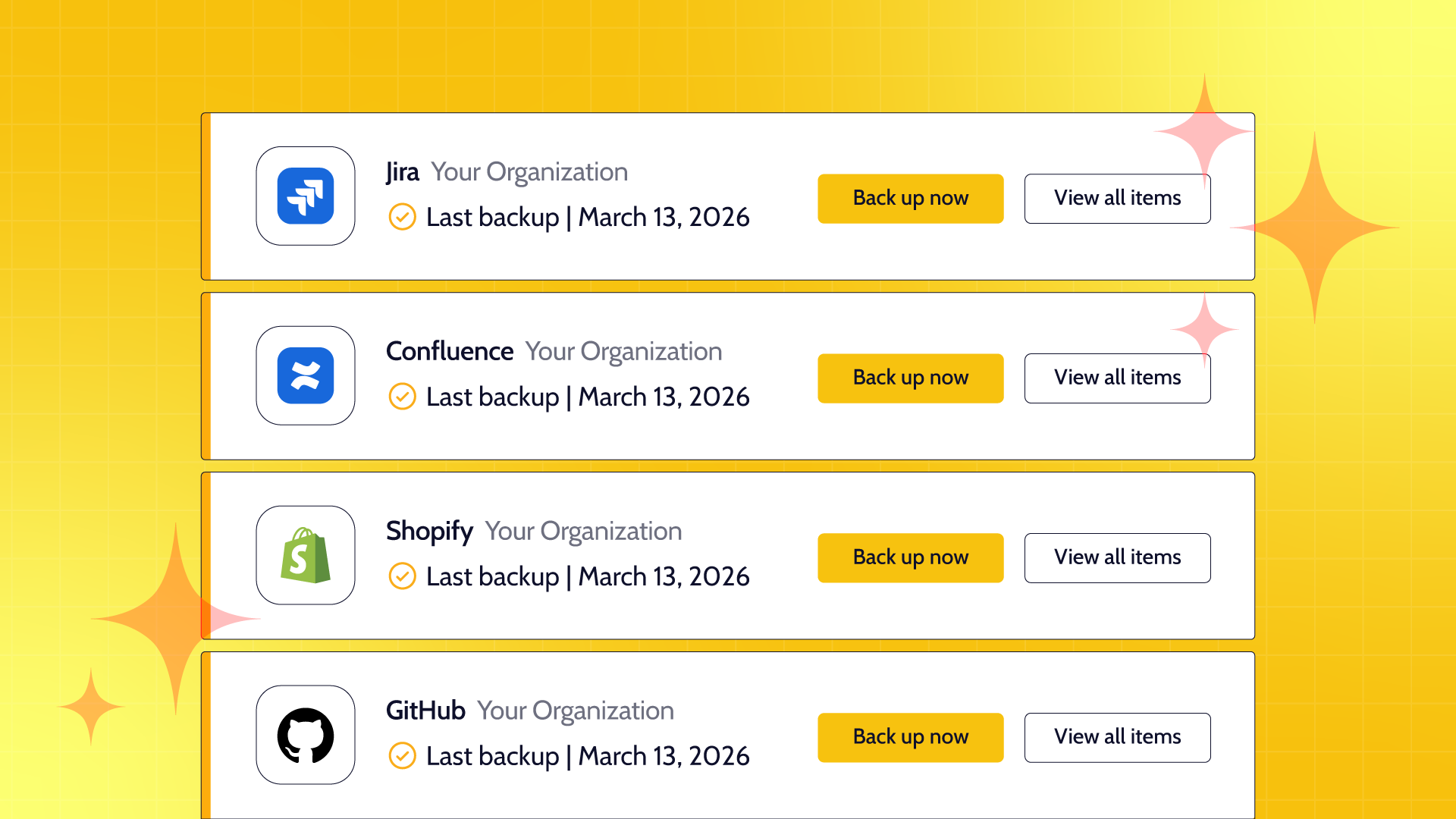

Rewind provides point-in-time recovery across the platforms AI agents work in. When a Rovo agent misclassifies a set of Jira tickets, the correct state can be restored. When an AI-generated Confluence page overwrites documentation that should have been preserved, it can be recovered. When Claude Code pushes changes to a GitHub repo that break something unexpected, the before-state is always there.

The permission gap closes when teams know they can recover. Not reconstruct from memory. Not audit logs manually to figure out what changed. Actually restore.
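To make "point-in-time recovery" concrete, here is a minimal in-memory sketch of the idea. It is not Rewind's API or implementation. The `SnapshotStore` class, its `take` and `restore` methods, and the integer timestamps are assumptions chosen for illustration only.

```python
import copy


class SnapshotStore:
    """Hypothetical store that keeps timestamped copies of some state."""

    def __init__(self):
        self._snapshots = []  # list of (timestamp, deep copy of state)

    def take(self, timestamp, state):
        # Deep-copy so later edits to the live state cannot alter the snapshot.
        self._snapshots.append((timestamp, copy.deepcopy(state)))

    def restore(self, as_of):
        # Return the most recent snapshot taken at or before `as_of`.
        eligible = [(t, s) for t, s in self._snapshots if t <= as_of]
        if not eligible:
            raise LookupError(f"no snapshot at or before {as_of}")
        latest = max(eligible, key=lambda pair: pair[0])
        return copy.deepcopy(latest[1])
```

In this sketch, if a snapshot is taken at time 100 and an agent mislabels a ticket before the next snapshot at time 200, calling `restore(as_of=150)` returns the state as it stood at time 100.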

The teams that get this right

Engineering teams that have closed the permission gap share a common characteristic. They did not do it by becoming more tolerant of risk. They did it by reducing the cost of a mistake to something manageable.

They give AI agents broader access because they know the downside is bounded. They review less and ship more because mistakes are recoverable. They are not playing defense against AI. They are using it.

That is what taking the limits off actually means. Not abandoning caution. Replacing the structural condition that makes caution the only rational response.

Rewind provides schema-aware, cross-platform backup and point-in-time recovery for the SaaS tools engineering teams depend on, including Jira, Confluence, and GitHub. More than 25,000 organizations worldwide trust Rewind to keep their teams shipping.
