We wrote in a previous blog post about how Terraform helps us manage a lot of infrastructure for several platforms in a consistent manner. Recently we developed an automated workflow for applying our Terraform configuration to environments, with full review and approval baked in. How? Github Actions.

Github Actions

Github actions has been generally available since November 2019 and we had already jumped on board for a number of key tasks:

- Automating code style

- Releasing of private Ruby Gems

- rspec testing

- … and more

Towards the end of 2019, I became familiar with the standardized Github actions published by HashiCorp for Terraform. These looked like something we could model our workflow on at Rewind. As we developed our workflow, there were a few bumps along the way that I’ll try to highlight in this post.

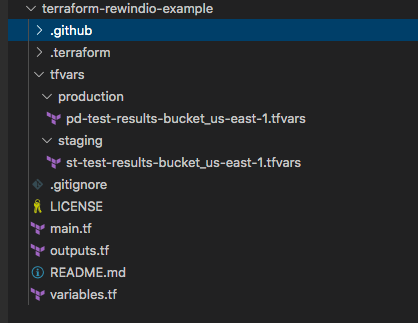

A small example repository to accompany this post is at rewindio/terraform-rewindio-example

Terraform Setup

At Rewind, we have several terraform repositories for different pieces of infrastructure. I’ve covered some of the layout in detail in this past post but in general, all of our repositories follow a similar layout that looks something like this

A real repository has more .tf files and modules but the general structure is similar. The key thing is how we lay out the .tfvars sub-directory structure and how we name workspaces.

There are separate AWS accounts for staging and production (a fairly common setup). Each account and region within that account requires its own .tfvars file containing the account-region specific configuration.

Further, each .tfvars file is tied to its own Terraform workspace, which is named using the same convention as the .tfvars file. Simply, we use

<account prefix><some descriptive text>_<aws region>

so st-test-results-bucket_us-east-1 is in the staging account, probably has something to do with test results and it’s in the us-east-1 region. All of our terraform templates parse the workspace name and pull out the region (one less thing to configure).

This standardized naming convention will be important when we show how the Github actions work below.
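
As a rough illustration of the convention, the pieces of a workspace name can be pulled apart with plain shell parameter expansion. This is a minimal sketch, not the parsing our Terraform templates actually do; the function name parse_workspace and the "unknown" fallback are assumptions made for the example.

```shell
# Hypothetical helper: split "<prefix><description>_<region>" into its parts.
# Assumes the region is everything after the last underscore and that the
# account prefix is st (staging) or pd (production).
parse_workspace() {
  ws="$1"
  region="${ws##*_}"   # text after the last "_"
  name="${ws%_*}"      # text before the last "_"
  case "$ws" in
    st*) account="staging" ;;
    pd*) account="production" ;;
    *)   account="unknown" ;;
  esac
  echo "$account $region $name"
}

parse_workspace "st-test-results-bucket_us-east-1"
# prints: staging us-east-1 st-test-results-bucket
```

Because the region never contains an underscore, splitting on the last underscore is enough here; a convention that allowed underscores in the region would need a stricter pattern.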

Github Secrets

Github secrets allow us to store sensitive values with encryption yet still access them from within Github actions. For our Terraform workflow, we need the following secrets defined:

- deploy_user_PAT — a Github access token for a user with read access to repos. This is required if your Terraform templates need access to private modules or you’re using a shared repo to store the state location

- AWS_ACCESS_KEY_ID_STAGING / AWS_SECRET_ACCESS_KEY_STAGING — AWS credentials used to access the staging account

- AWS_ACCESS_KEY_ID_PRODUCTION / AWS_SECRET_ACCESS_KEY_PRODUCTION — AWS credentials used to access the production account

Github secrets are managed on a per-repo basis so if you have a few repos, it can become a challenge to manage these. We created the Github Secrets Manager tool to make this easier across repos. Since this article was originally published, secrets can now also be managed at the organization level.

Terraform Github Actions

We’re using a fork of the official terraform Github actions that adds in 2 pieces of functionality. One of these already has a pending PR from Alex Jurkiewicz and the other we have submitted a PR for ourselves. The added functionality in our fork is:

- Always save the full plan output as an artifact with the Github actions job. Plans greater than 64K are truncated due to limits in Github PR comments

- Allow Terraform apply output to be posted to the PR comments when invoked as part of a comment on a PR

In both the plan and apply workflows we will outline below, we use the matrix strategy for jobs which allows the workflow to dynamically generate jobs and run them in parallel.

Let’s walk through the details of the plan and apply workflows.

Terraform Plan Workflow

The plan workflow is stored under .github/workflows/tf-plan.yaml and invoked whenever a new pull request is created.

Let’s break down the jobs section, with examples where warranted. Refer to the example repo in Github for the full workflow:

- Use the matrix strategy. This gives us one job per entry and creates a variable called workspace that we can access using ${{ matrix.workspace }}

      terraform-plan:
        strategy:
          matrix:
            workspace: [st-test-results-bucket_us-east-1,
                        pd-test-results-bucket_us-east-1]

- Generate the path to the .tfvars file to use depending on the name of the workspace. This is where a strong, consistent naming strategy really helps when automating a process. This step gives us an output variable with the path to the .tfvars file for the workspace, which can be accessed using ${{ steps.tfvars.outputs.tfvars_file }}. It’s important when generating outputs that you use the id field in the step so the output can be referenced

      - name: Generate tfvars Path
        id: tfvars
        run: |
          if [[ "${WORKSPACE}" == "st"* ]]; then
            echo "::set-output name=tfvars_file::tfvars/staging/${WORKSPACE}.tfvars"
          elif [[ "${WORKSPACE}" == "pd"* ]]; then
            echo "::set-output name=tfvars_file::tfvars/production/${WORKSPACE}.tfvars"
          else
            echo "::set-output name=tfvars_file::UNKNOWN"
          fi

- Check out the code in the repo. Usually this is a straightforward step in a workflow and not worth mentioning. However, in this case, note that we use the access token set in the repo secrets instead of the usual ${{ secrets.GITHUB_TOKEN }}. This is because the standard GITHUB_TOKEN secret only has access to this repo. If you’re using private Terraform modules in other repos, you will not be able to pull them during the init step. You will also need to use a token if you are using git submodules (which only v1.0.0 of the checkout action supports; submodule support was, incredibly, removed in v2.0.0)

      - name: Checkout
        uses: actions/checkout@1.0.0
        with:
          submodules: 'true'
          token: ${{ secrets.deploy_user_PAT }}

- Set up the AWS credentials file. In our case, we drive everything off a named profile (namely, staging or production) rather than setting the keys in the environment. This step just creates the named profiles. A final step will remove the profiles and associated credentials

- The next 3 steps run a format/init and validate and follow the recommended workflow documented by HashiCorp. The steps are pretty straightforward

- The actual plan step! Remember that this is running as one of the auto-generated matrix jobs where the workspace is parameterized for us. We can pass the workspace information and the path to the .tfvars file which we generated earlier and thus generate a plan for the current workspace job

      - name: Terraform Plan
        id: terraform-plan
        uses: rewindio/terraform-github-actions@master
        with:
          tf_actions_version: ${{ env.TF_VERSION }}
          tf_actions_subcommand: plan
          args: -var-file backend/backend.tfvars -var-file ${{ steps.tfvars.outputs.tfvars_file }}
        env:
          TF_WORKSPACE: ${{ env.WORKSPACE }}
          AWS_SHARED_CREDENTIALS_FILE: .aws/credentials
          GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

- Get the full plan output. As mentioned above, the plan is truncated to 64K due to limits on the amount of data that can be added as a comment. We found that most of our plans ran over this limit because we use AWS Elastic Beanstalk, which generates a huge amount of output even if only a single parameter changes. For this reason, we forked the official HashiCorp actions to write the full plan output to a file. This can then be published as an artifact of the job

- name: Upload Plan Artifact

uses: actions/upload-artifact@v1

with:

name: terraform-plan-${{ env.WORKSPACE }}

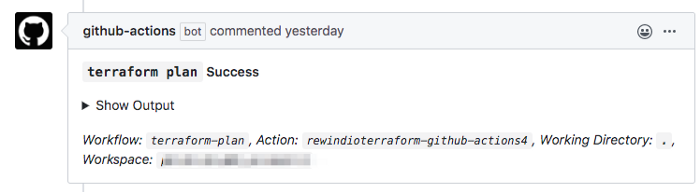

path: ${{ steps.terraform-plan.outputs.tf_actions_plan_output_file }}That’s the plan workflow. If all works well, you will end up with a comment to the pull request that looks like this:
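
The credentials step is described in the plan workflow but not shown, so here is one way it might look. This is a sketch under assumptions rather than the step from our repo: the function name write_profile is made up, and the key values are placeholders standing in for the staging and production secrets listed earlier.

```shell
# Hypothetical sketch of the credentials step: append a named profile to the
# file that AWS_SHARED_CREDENTIALS_FILE points at in the later steps.
# The workflow uses .aws/credentials; a temporary directory is used here so
# the example can be run anywhere without touching real credentials.
CREDS_FILE="$(mktemp -d)/credentials"

write_profile() {
  profile="$1"
  key_id="$2"
  secret="$3"
  # Standard AWS shared credentials format: one [profile] section per account.
  {
    echo "[${profile}]"
    echo "aws_access_key_id = ${key_id}"
    echo "aws_secret_access_key = ${secret}"
    echo
  } >> "$CREDS_FILE"
  chmod 600 "$CREDS_FILE"
}

# Placeholder values; in a workflow these would be mapped from the secrets.
write_profile staging "example-staging-id" "example-staging-secret"
write_profile production "example-production-id" "example-production-secret"

# Final clean-up, mirroring the last step that removes the profiles.
rm -f "$CREDS_FILE"
```

Keeping both profiles in one file means every matrix job can use the same file and simply pick the profile that matches its account.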

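The ::set-output command used in the Generate tfvars Path step has since been deprecated by Github in favor of appending name=value lines to the file named by $GITHUB_OUTPUT. A sketch of the same path logic in that style follows. The function name write_tfvars_output and the temporary-file fallback are assumptions for the example, not part of our workflow.

```shell
# Sketch: same prefix-based lookup as the "Generate tfvars Path" step, but
# writing the step output to the file named by $GITHUB_OUTPUT.
# write_tfvars_output is a hypothetical name used only for this example.
write_tfvars_output() {
  workspace="$1"
  case "$workspace" in
    st*) tfvars_file="tfvars/staging/${workspace}.tfvars" ;;
    pd*) tfvars_file="tfvars/production/${workspace}.tfvars" ;;
    *)   tfvars_file="UNKNOWN" ;;
  esac
  # Each "name=value" line appended here becomes a step output.
  echo "tfvars_file=${tfvars_file}" >> "$GITHUB_OUTPUT"
}

# Outside of a workflow run GITHUB_OUTPUT is not set, so fall back to a
# temporary file to try the function locally.
GITHUB_OUTPUT="${GITHUB_OUTPUT:-$(mktemp)}"
write_tfvars_output "st-test-results-bucket_us-east-1"
cat "$GITHUB_OUTPUT"
```

Later steps read the value the same way as before, using ${{ steps.tfvars.outputs.tfvars_file }}.
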
Terraform Apply Workflow

The apply workflow is a little different in that it is triggered by a comment on the pull request itself. This raised 3 problems:

- For the purpose of comments, pull requests are considered issues in Github.

- Comments on issues always reference the head of a repo rather than the branch associated with the PR.

- The HashiCorp github actions always assumed they were being called from a pull request create or merge. Commenting back to the pull request did not work if triggered from a comment.

The second point answered a long-standing question I had when using Github actions: why my workflow sometimes used the yaml file in the master branch rather than the one I was changing!

In looking into all of these, I found this open pull request from Alex Jurkiewicz which essentially solved all of this. After forking the official repo and merging Alex’s great changes, here are the main pieces of our apply workflow (again, see the example repo for the full workflow)

- Only trigger on an issue comment

      on:
        issue_comment:
          types: [created]

- Only run the workflow jobs if the comment is prefixed with terraform apply AND this is a comment on a pull request, not a regular issue

      jobs:
        terraform-apply:
          # Only run for comments starting with "terraform apply" in a pull request.
          if: >
            startsWith(github.event.comment.body, 'terraform apply') &&
            startsWith(github.event.issue.pull_request.url, 'https://')

- Use our matrix strategy again

      strategy:
        matrix:
          workspace: [st-test-results-bucket_us-east-1, pd-test-results-bucket_us-east-1]

- Generate the path to the .tfvars file. This is the same as in the plan workflow with one addition: an output called env_name is set, indicating staging or production. Later we can use this for applying to all workspaces, only staging, or only production.

- Load the PR details. This is the brilliant step developed by Alex to pull both the head SHA and the URL for the pull request comments from the incoming event. Recall, comments on pull requests have the SHA set to the head of the repo by default

      - name: 'Load PR Details'
        id: load-pr
        run: |
          set -eu
          resp=$(curl -sSf \
            --url ${{ github.event.issue.pull_request.url }} \
            --header 'authorization: Bearer ${{ secrets.GITHUB_TOKEN }}' \
            --header 'content-type: application/json')
          sha=$(jq -r '.head.sha' <<< "$resp")
          echo "::set-output name=head_sha::$sha"
          comments_url=$(jq -r '.comments_url' <<< "$resp")
          echo "::set-output name=comments_url::$comments_url"

- Determine which workspaces we should apply. The apply workflow allows the user to apply to all workspaces, a specific workspace, all staging workspaces or all production workspaces. This step uses the github-script action to set a boolean output for whether the following steps should execute or not. This does not need to be a switch..case but was written that way so it can be extended with further commands in future. The key part here is the setting of an output (do_apply) which we can check in subsequent workflow steps.

      - name: Determine Command
        id: determine-command
        uses: actions/github-script@0.2.0
        env:
          ENV_NAME: ${{ steps.tfvars.outputs.env_name }}
        with:
          github-token: ${{github.token}}
          script: |
            // console.log(context)
            const body = context.payload.comment.body.toLowerCase().trim()
            console.log("Detected PR comment: " + body)
            console.log("This job is for workspace " + process.env.WORKSPACE)
            // Split the comment on whitespace: ["terraform", "apply", <optional target>]
            const commandArray = body.split(/\s+/)
            if (commandArray[0] == "terraform") {
              const action = commandArray[1]
              switch(action) {
                case "apply":
                  if (typeof commandArray[2] === 'undefined') {
                    // No target given: apply to every workspace
                    console.log("::set-output name=do_apply::true")
                  } else if (commandArray[2] == process.env.WORKSPACE) {
                    // Target names this job's workspace
                    console.log("::set-output name=do_apply::true")
                  } else if (commandArray[2] == process.env.ENV_NAME) {
                    // Target names this job's environment (staging or production)
                    console.log("::set-output name=do_apply::true")
                  } else {
                    console.log("apply command is not for this job")
                  }
                  break
              }
            }

- Check out the code from the repo. Usually this step is too simple to mention, but there are 3 important changes here:

  - Using an if condition to only run this step if the previous step determined we are doing an apply for this workspace. This is also present in all subsequent steps.

  - Referencing the code for the branch associated with this pull request using the ref option

  - As with plan, we use an access token set as a secret for the token parameter

      - name: Checkout
        if: steps.determine-command.outputs.do_apply == 'true'
        uses: actions/checkout@1.0.0
        with:
          ref: ${{ steps.load-pr.outputs.head_sha }}
          submodules: 'true'
          token: ${{ secrets.deploy_user_PAT }}

- Initialize Terraform. Here we have the same if condition as with the checkout to only initialize when needed. We can use the regular GITHUB_TOKEN here as the action only uses it to comment back to this pull request

      - name: Terraform Init
        uses: rewindio/terraform-github-actions@master
        if: steps.determine-command.outputs.do_apply == 'true'
        with:
          tf_actions_version: ${{ env.TF_VERSION }}
          tf_actions_subcommand: init
          args: -backend-config backend/backend.tfvars
        env:
          TF_WORKSPACE: ${{ env.WORKSPACE }}
          AWS_SHARED_CREDENTIALS_FILE: .aws/credentials
          GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

- Apply! The step we finally want to get to. Let’s look at the interesting parts

  - tf_actions_comment_url: this is the new parameter added to allow the apply command output to be commented back to the pull request. The URL itself comes from the load-pr step

  - args: the .tfvars file is determined in the tfvars step and used here in the apply. Remember there are multiple jobs running concurrently in the matrix strategy

  - AWS_SHARED_CREDENTIALS_FILE: this is needed because the usual path and home variables that allow AWS SDKs to load credentials are not automatically set in Github actions.

- name: Terraform Apply

if: steps.determine-command.outputs.do_apply == 'true'

uses: rewindio/terraform-github-actions@master

with:

tf_actions_version: ${{ env.TF_VERSION }}

tf_actions_subcommand: apply

tf_actions_comment: true

tf_actions_comment_url: ${{ steps.load-pr.outputs.comments_url }}

args: -var-file backend/backend.tfvars -var-file ${{ steps.tfvars.outputs.tfvars_file }}

env:

TF_WORKSPACE: ${{ env.WORKSPACE }}

AWS_SHARED_CREDENTIALS_FILE: .aws/credentials

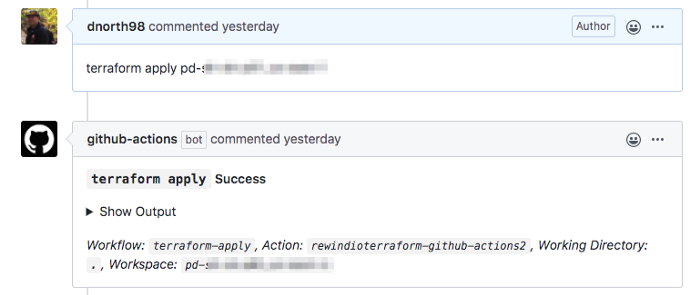

GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}Here’s what the output looks like back to the pull request:
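
The matching rules in the Determine Command step can also be checked locally before pushing a workflow change. The following is a loose shell port of that logic, offered as a sketch only: the workflow itself uses the github-script step, and the function name should_apply is an assumption.

```shell
# Hypothetical port of the "Determine Command" rules.
# Prints "true" when a PR comment should trigger an apply for this job.
#   $1 = comment body, $2 = this job's workspace, $3 = its env name
should_apply() {
  # Lower-case the comment; word splitting below also trims extra whitespace.
  body=$(printf '%s' "$1" | tr '[:upper:]' '[:lower:]')
  workspace="$2"
  env_name="$3"
  set -f          # do not glob-expand words taken from the comment
  set -- $body    # positional params become: terraform apply [target]
  set +f
  if [ "${1:-}" != "terraform" ] || [ "${2:-}" != "apply" ]; then
    echo "false"
    return
  fi
  target="${3:-}"
  # No target applies everywhere; otherwise match the workspace or env name.
  if [ -z "$target" ] || [ "$target" = "$workspace" ] || [ "$target" = "$env_name" ]; then
    echo "true"
  else
    echo "false"
  fi
}

should_apply "terraform apply" "st-test-results-bucket_us-east-1" "staging"            # prints: true
should_apply "terraform apply production" "st-test-results-bucket_us-east-1" "staging" # prints: false
```

Each matrix job evaluates the same comment against its own workspace and env name, which is why one comment can fan out to some jobs and be ignored by others.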

Bonus: Terraform Help Workflow

In the example repo, there’s a bonus workflow — tf-help.yml. Like the apply workflow, this responds to comments on a pull request — specifically terraform help. The steps to get the pull request details and checkout the code have been covered but here’s the step to output the help:

      - name: Show Help
        env:
          COMMENTS_URL: ${{ steps.load-pr.outputs.comments_url }}
        run: |
          set -eu
          echo "Sending help text to: $COMMENTS_URL"
          # Wrap the markdown help text in the JSON object Github expects: {"body": "..."}
          helpPayload=$(cat .github/workflows/tf-help.md | jq -R --slurp '{body: .}')
          # --data makes curl send a POST with the payload read from stdin
          resp=$(echo "$helpPayload" | curl -sSf \
            --url "$COMMENTS_URL" \
            --data @- \
            --header 'authorization: Bearer ${{ secrets.GITHUB_TOKEN }}' \
            --header 'content-type: application/json')
          echo "Adding comment returned: $resp"

- We read a markdown file containing the help and then format it into the JSON that Github expects for a comment. Essentially it just needs a body field.

- Using curl, we send a POST to the comments URL. Where does this URL come from? It is determined in the same step that determined the SHA used to check out the code for the pull request branch, and it is derived from the incoming event. You can use this methodology to add a comment to any pull request (or issue!) from a workflow step.

Wrapping Up

Terraform is a powerful tool. Github actions are a powerful orchestration framework. Using the two together with the matrix job strategy has increased productivity significantly due to the parallelization of jobs. At the same time, because everything is driven by pull requests, we have a fully trackable and auditable log of who has made what changes and when.

Dave North